“Capabilities like AutoGen are poised to fundamentally transform and extend what large language models are capable of. This is one of the most exciting developments I have seen in AI recently.”

Doug Burger, Technical Fellow, Microsoft

It requires a lot of effort and expertise to design, implement, and optimize a workflow that can leverage the full potential of large language models (LLMs). Automating these workflows has tremendous value. As developers begin to create increasingly complex LLM-based applications, workflows will inevitably grow more intricate. The potential design space for such workflows could be vast and complex, thereby heightening the challenge of orchestrating an optimal workflow with robust performance.

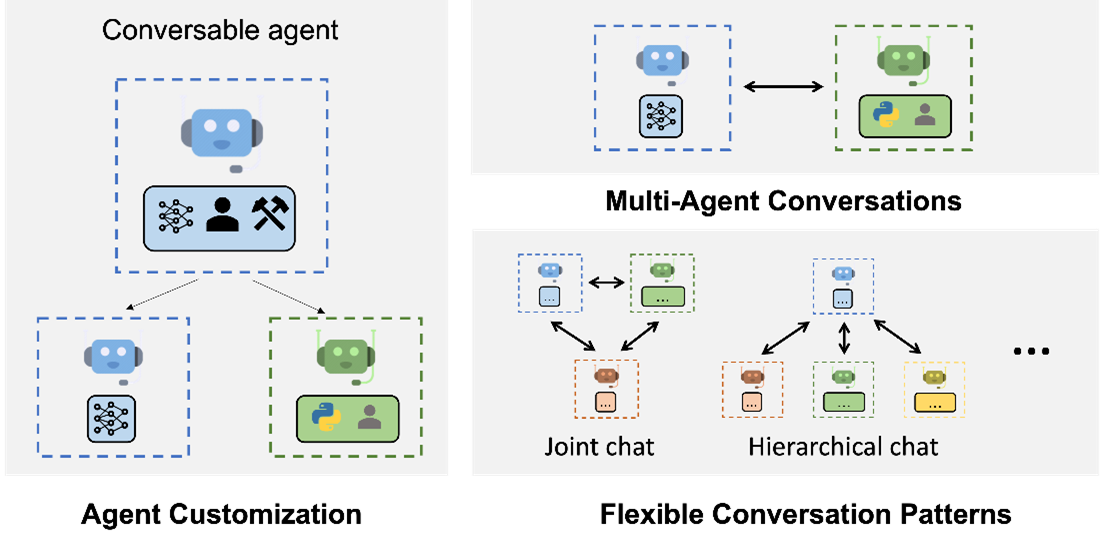

AutoGen is a framework for simplifying the orchestration, optimization, and automation of LLM workflows. It offers customizable and conversable agents that leverage the strongest capabilities of the most advanced LLMs, like GPT-4, while addressing their limitations by integrating with humans and tools and having conversations between multiple agents via automated chat.

With AutoGen, building a complex multi-agent conversation system boils down to:

- Defining a set of agents with specialized capabilities and roles.

- Defining the interaction behavior between agents, i.e., what to reply when an agent receives messages from another agent.
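The two steps above can be sketched in plain Python without any LLM. This is a toy illustration under stated assumptions, not AutoGen's API: `ToyAgent`, `reply_fn`, and `run_chat` are hypothetical names, and the reply functions are hard-coded stand-ins for behavior that an LLM, a human, or a tool would supply in practice.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# A reply function maps an incoming message to a reply, or to None to end the chat.
ReplyFn = Callable[[str], Optional[str]]


@dataclass
class ToyAgent:
    """Hypothetical stand-in for a conversable agent (not an AutoGen class)."""
    name: str          # step 1: the agent's role
    reply_fn: ReplyFn  # step 2: what to reply when a message arrives


def run_chat(sender: ToyAgent, receiver: ToyAgent, message: str,
             max_turns: int = 6) -> List[Tuple[str, str]]:
    """Pass messages back and forth until a reply is None or turns run out."""
    transcript = [(sender.name, message)]
    for _ in range(max_turns):
        reply = receiver.reply_fn(message)
        if reply is None:
            break
        transcript.append((receiver.name, reply))
        # Swap roles: the agent that just replied now waits for an answer.
        sender, receiver, message = receiver, sender, reply
    return transcript


def writer_reply(message: str) -> Optional[str]:
    # Hard-coded behavior; stops once the draft has been approved.
    return None if message == "APPROVED" else "DRAFT: 2 + 2 = 4"


def reviewer_reply(message: str) -> Optional[str]:
    # Approves anything that looks like a draft.
    return "APPROVED" if message.startswith("DRAFT:") else "Please send a draft."


writer = ToyAgent("writer", writer_reply)
reviewer = ToyAgent("reviewer", reviewer_reply)

for speaker, text in run_chat(reviewer, writer, "Compute 2 + 2."):
    print(f"{speaker}: {text}")
```

Because each agent is just a name plus a reply function, the same agent can be reused in a different conversation by pairing it with a different partner.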

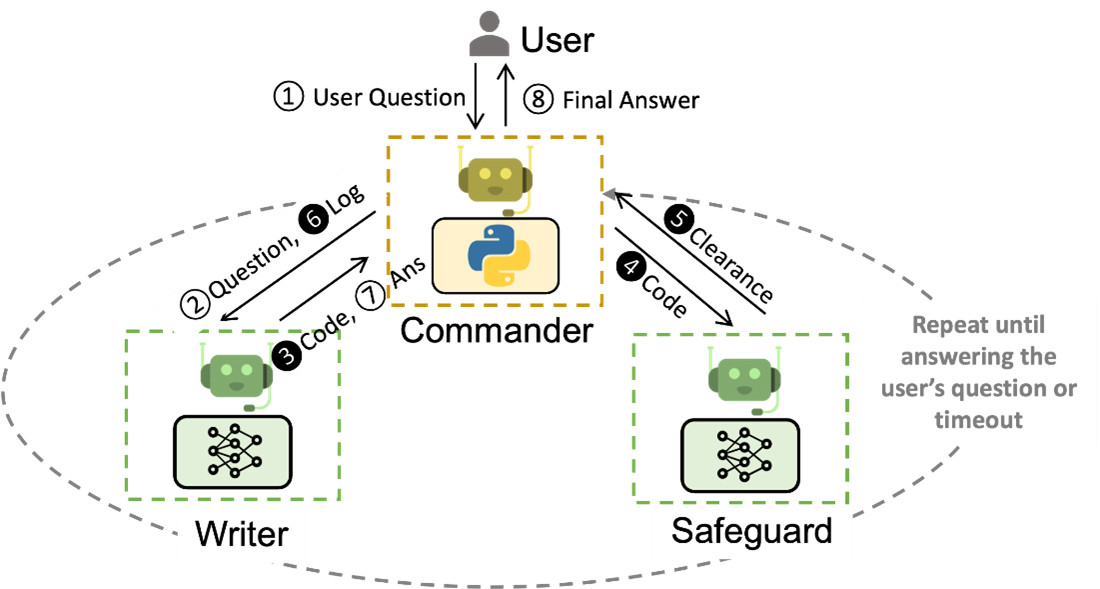

Both steps are intuitive and modular, making these agents reusable and composable. For example, to build a system for code-based question answering, one can design the agents and their interactions as in Figure 2. Such a system is shown to reduce the number of manual interactions needed by 3x to 10x in applications like supply-chain optimization. Using AutoGen also leads to more than a 4x reduction in coding effort.

Capable, conversable, and customizable agents – integrating LLMs, humans, and tools

AutoGen agents have capabilities enabled by LLMs, humans, tools, or a mix of those elements. For example:

- One can easily configure the usage and roles of LLMs in an agent (e.g., automated complex task solving by group chat) with advanced inference features (e.g., optimizing performance with inference parameter tuning).

- Human intelligence and oversight can be achieved through a proxy agent with different involvement levels and patterns (e.g., automated task solving with GPT-4 + multiple human users).

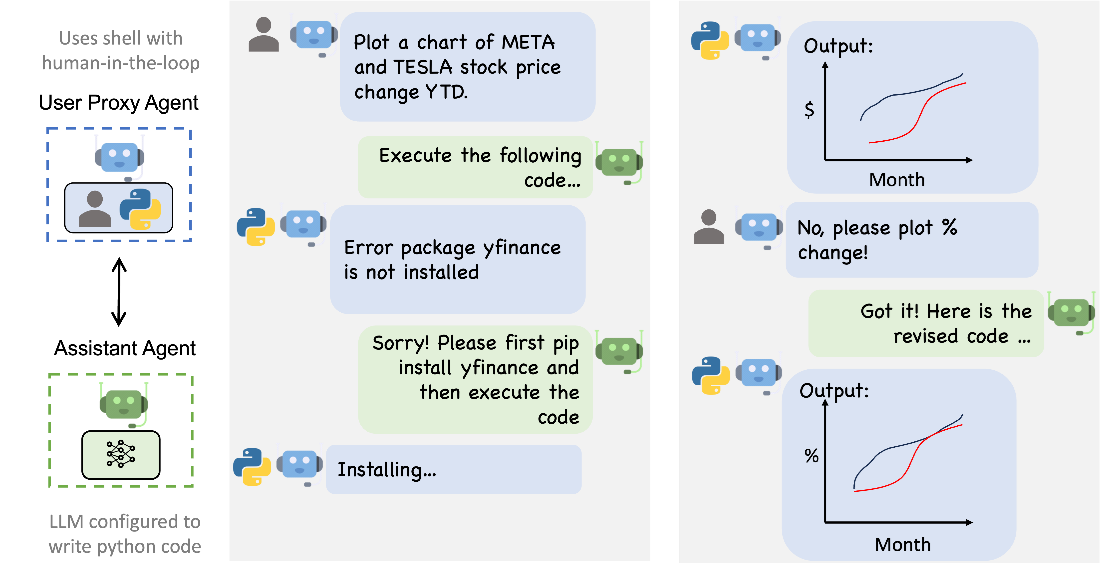

- The agents have native support for LLM-driven code/function execution (e.g., automated task solving with code generation, execution, and debugging, or using provided tools as functions).
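One way to picture "different involvement levels" is a proxy that consults a human on every turn, only when the conversation is about to end, or never. The sketch below is a toy model of that idea. `ToyHumanProxy`, `HumanInput`, `ask_human`, and the `TERMINATE` marker are illustrative assumptions and may not match AutoGen's actual parameters.

```python
from enum import Enum
from typing import Callable, Optional


class HumanInput(Enum):
    ALWAYS = "always"              # ask the human on every turn
    ON_TERMINATE = "on_terminate"  # ask only when the chat is about to end
    NEVER = "never"                # fully automated


class ToyHumanProxy:
    """Hypothetical proxy that decides when a human gets a say (not an AutoGen class)."""

    def __init__(self, mode: HumanInput, ask_human: Callable[[str], str],
                 auto_reply: str = "Looks good, please continue."):
        self.mode = mode
        self.ask_human = ask_human
        self.auto_reply = auto_reply

    def reply(self, message: str) -> Optional[str]:
        """Return the next message, or None to let the conversation end."""
        ending = message.rstrip().endswith("TERMINATE")
        consult = self.mode is HumanInput.ALWAYS or (
            self.mode is HumanInput.ON_TERMINATE and ending)
        if consult:
            typed = self.ask_human(message)
            if typed:  # empty input means "no comment, proceed automatically"
                return typed
        return None if ending else self.auto_reply


# Scripted "human" so the example runs unattended; pass `input` for a real prompt.
def scripted_human(message: str) -> str:
    return "Also add a unit test."


proxy = ToyHumanProxy(HumanInput.ON_TERMINATE, scripted_human)
print(proxy.reply("Here is my plan."))     # automatic reply, human not asked
print(proxy.reply("All done. TERMINATE"))  # human gets the final say
```

Keeping the human behind the same `reply` interface as any other agent is what lets the rest of the conversation stay unchanged when the level of oversight changes.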

One straightforward way of using built-in agents from AutoGen is to invoke automated chat between an assistant agent and a user proxy agent. As an example (Figure 3), one can easily build an enhanced version of ChatGPT + Code Interpreter + plugins, with a customizable degree of automation, usable in a custom environment and embeddable in a bigger system. It is also easy to extend their behavior to support diverse application scenarios, such as adding personalization and adaptability based on past interactions (e.g., automated continual learning or teaching agents new skills).
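The exchange between an assistant that writes code and a proxy that runs it can be sketched as a loop in which execution results are fed back until the code succeeds. This is a minimal sketch under stated assumptions: `fake_assistant` is a hard-coded stand-in for an LLM, `execute` runs code in-process with no sandboxing, and neither reflects how AutoGen itself generates or executes code.

```python
import contextlib
import io
from typing import List, Optional, Tuple


def execute(code: str) -> Tuple[bool, str]:
    """Run code in a fresh namespace and capture stdout. Toy only: no sandbox."""
    buffer = io.StringIO()
    try:
        with contextlib.redirect_stdout(buffer):
            exec(code, {})
        return True, buffer.getvalue()
    except Exception as exc:
        # The error text becomes the feedback message for the assistant.
        return False, f"{type(exc).__name__}: {exc}"


def fake_assistant(feedback: Optional[str]) -> str:
    """Hypothetical assistant: buggy first attempt, fixed once it sees an error."""
    if feedback is None:
        return "values = [3, 1, 2]\nprint(sorted(values)[3])"  # index out of range
    return "values = [3, 1, 2]\nprint(sorted(values)[-1])"     # corrected index


def solve(max_rounds: int = 3) -> Tuple[Optional[str], List[str]]:
    """Alternate between code generation and execution until a run succeeds."""
    feedback: Optional[str] = None
    log: List[str] = []
    for _ in range(max_rounds):
        code = fake_assistant(feedback)
        ok, output = execute(code)
        log.append(output.strip())
        if ok:
            return output.strip(), log
        feedback = output  # send the error back for another attempt
    return None, log


result, log = solve()
print("result:", result)
print("log:", log)
```

The `max_rounds` cap plays the role of a customizable degree of automation: it bounds how long the two agents may iterate without outside intervention.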

The agent conversation-centric design has numerous benefits, including that it:

- Naturally handles ambiguity, feedback, progress, and collaboration.

- Enables effective coding-related tasks, like tool use with back-and-forth troubleshooting.

- Allows users to seamlessly opt in or opt out via an agent in the chat.

- Achieves a collective goal with the cooperation of multiple specialists.

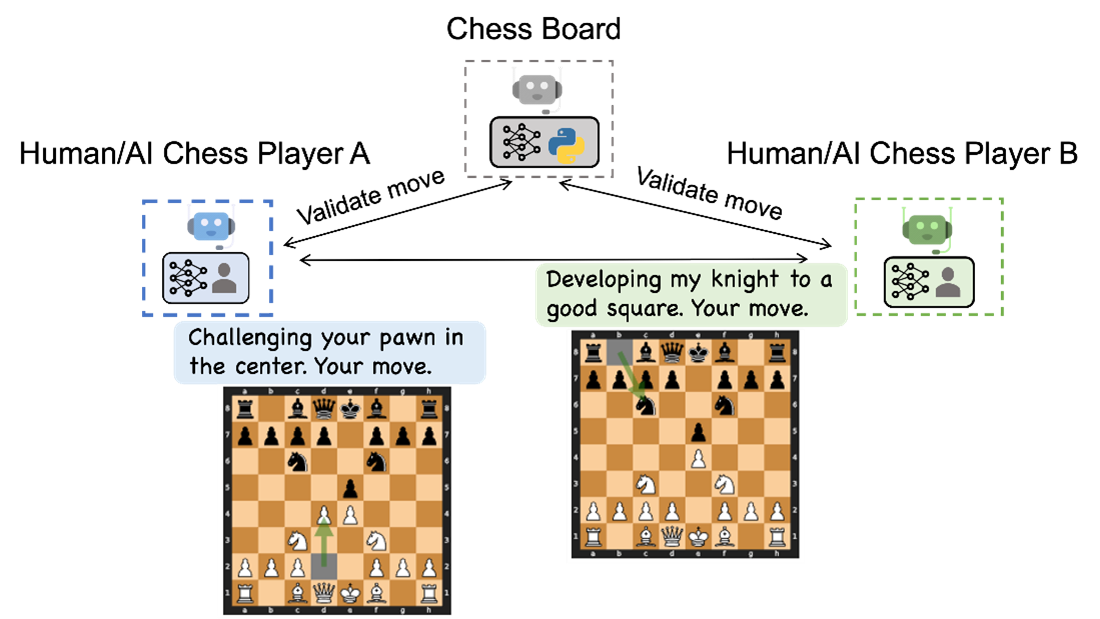

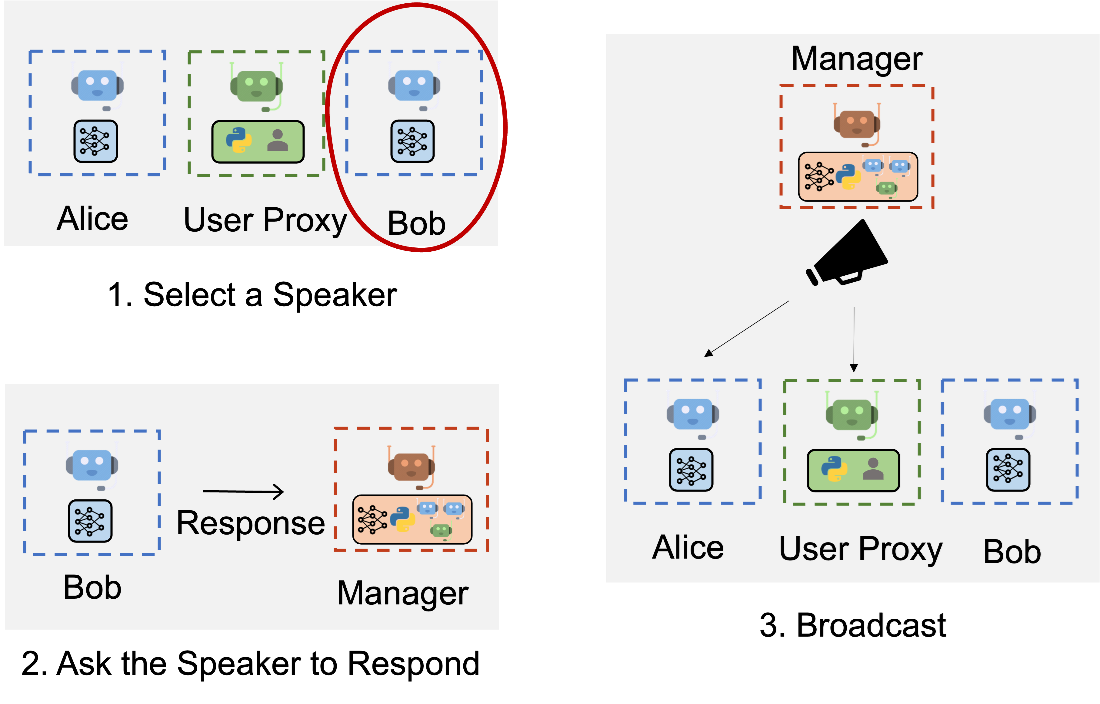

AutoGen supports automated chat and diverse communication patterns, making it easy to orchestrate a complex, dynamic workflow and experiment with versatility. Figure 4 illustrates a new game, conversational chess, enabled by AutoGen. Figure 5 illustrates how AutoGen supports group chats between multiple agents using another special agent called the “GroupChatManager”.
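A group chat can be pictured as a manager that repeatedly picks the next speaker and appends that speaker's message to a history shared by everyone. The sketch below uses round-robin speaker selection, which is an assumption chosen for simplicity; the text above does not say how the "GroupChatManager" selects speakers. `ToyMember` and `ToyGroupChatManager` are hypothetical names, not AutoGen classes.

```python
from typing import Callable, List, Tuple

Message = Tuple[str, str]  # (speaker name, content)


class ToyMember:
    """Hypothetical group member that speaks based on the shared history."""

    def __init__(self, name: str, speak: Callable[[List[Message]], str]):
        self.name = name
        self.speak = speak


class ToyGroupChatManager:
    """Hypothetical manager: chooses who speaks next and shares the history."""

    def __init__(self, members: List[ToyMember], max_rounds: int = 6):
        self.members = members
        self.max_rounds = max_rounds

    def run(self, task: str) -> List[Message]:
        history: List[Message] = [("user", task)]
        for i in range(self.max_rounds):
            # Round-robin selection (assumed); other policies could go here.
            speaker = self.members[i % len(self.members)]
            content = speaker.speak(history)
            history.append((speaker.name, content))
            if content.endswith("TERMINATE"):
                break
        return history


# Hard-coded specialists standing in for LLM-backed agents.
planner = ToyMember("planner", lambda h: "Plan: write the code, then review it.")
coder = ToyMember("coder", lambda h: "Code: print('hello')")
reviewer = ToyMember("reviewer", lambda h: "Review: looks fine. TERMINATE")

manager = ToyGroupChatManager([planner, coder, reviewer])
for name, content in manager.run("Say hello."):
    print(f"{name}: {content}")
```

Centralizing speaker selection in one place means a different policy can be tried without changing any of the participating agents.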

Getting started

AutoGen (in preview) is freely available as a Python package. To install it, run:

    pip install pyautogen

You can quickly enable a powerful experience with just a few lines of code:

    import autogen

    assistant = autogen.AssistantAgent("assistant")
    user_proxy = autogen.UserProxyAgent("user_proxy")
    user_proxy.initiate_chat(assistant, message="Show me the YTD gain of 10 largest technology companies as of today.")
    # This triggers automated chat to solve the task

Check examples for a wide variety of tasks: https://microsoft.github.io/autogen/docs/Examples/AutoGen-AgentChat.

Next steps:

- Use AutoGen in your LLM applications and provide feedback on Discord

- Read about the research:

AutoGen is an open-source, community-driven project under active development (as a spinoff from FLAML, a fast library for automated machine learning and tuning), and it encourages contributions from individuals of all backgrounds. Many Microsoft Research collaborators have made great contributions to this project, including academic contributors like Pennsylvania State University and the University of Washington, and product teams like Microsoft Fabric and ML.NET. AutoGen aims to provide an effective and easy-to-use framework for developers to build next-generation applications. It already demonstrates promising opportunities to build creative applications and provides a large space for innovation.

Names of Microsoft contributors:

Chi Wang, Gagan Bansal, Eric Zhu, Beibin Li, Li Jiang, Xiaoyun Zhang, Ahmed Awadallah, Ryen White, Doug Burger, Robin Moeur, Victor Dibia, Adam Fourney, Piali Choudhury, Saleema Amershi, Ricky Loynd, Hamed Khanpour, and Ece Kamar.