Heuristic algorithms, often referred to as heuristics, are tools used to approximate optimal algorithms to make faster and more efficient decisions. These are particularly useful in operational scenarios in production systems, such as determining which server a virtual machine should be assigned to or deciding whether data should be removed from a cache in a content delivery network.

However, cloud operators, who are responsible for designing and managing systems in production, often struggle to evaluate when and where their heuristics may underperform. This challenge can lead to over-provisioning the network and the inefficient use of available resources. Such practices can be costly and may result in the inability to meet customer demand.

To address this, we developed MetaOpt, a heuristic analyzer designed to enable operators to examine, explain, and improve heuristics’ performance before deploying them in critical, high-stakes environments. MetaOpt is unique because it not only compares algorithm performance but also provides insights into the underlying reasons for performance disparities between algorithms. It empowers operators and researchers to conduct “what-if” analyses, strategize how to combine heuristics in production, and understand why certain heuristics perform better in specific areas of the input space—the range of possible inputs that the heuristic may encounter.

Spotlight: Microsoft research newsletter

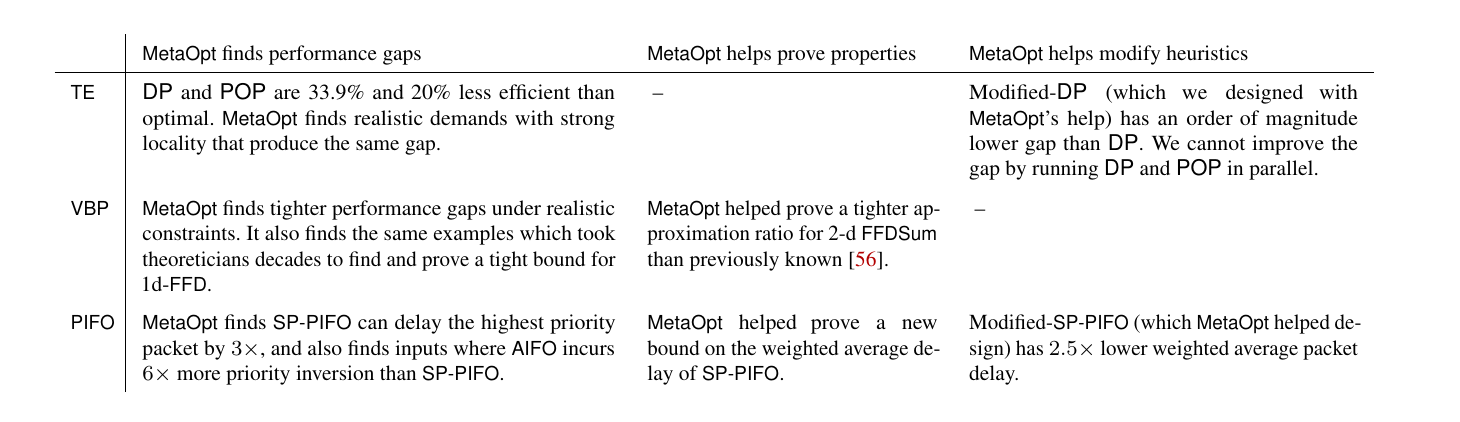

We demonstrate MetaOpt’s capability for heuristic analysis by studying heuristics from three domains: traffic engineering, vector bin packing, and packet scheduling. MetaOpt identifies large performance gaps, enables us to prove properties about these heuristics, and guides us in improving them. Table 1 summarizes the results.

Currently, MetaOpt helps Azure operators analyze heuristics in production and serves as a “helper for theorem proving.” For example, we used MetaOpt to establish a tighter bound for the “first fit decreasing” heuristic in vector bin packing, a challenge for theoreticians for over three decades. As a result, we don’t need to over-provision resources in a cloud environment, ensuring we always have sufficient servers to meet customer demand.

MetaOpt framework

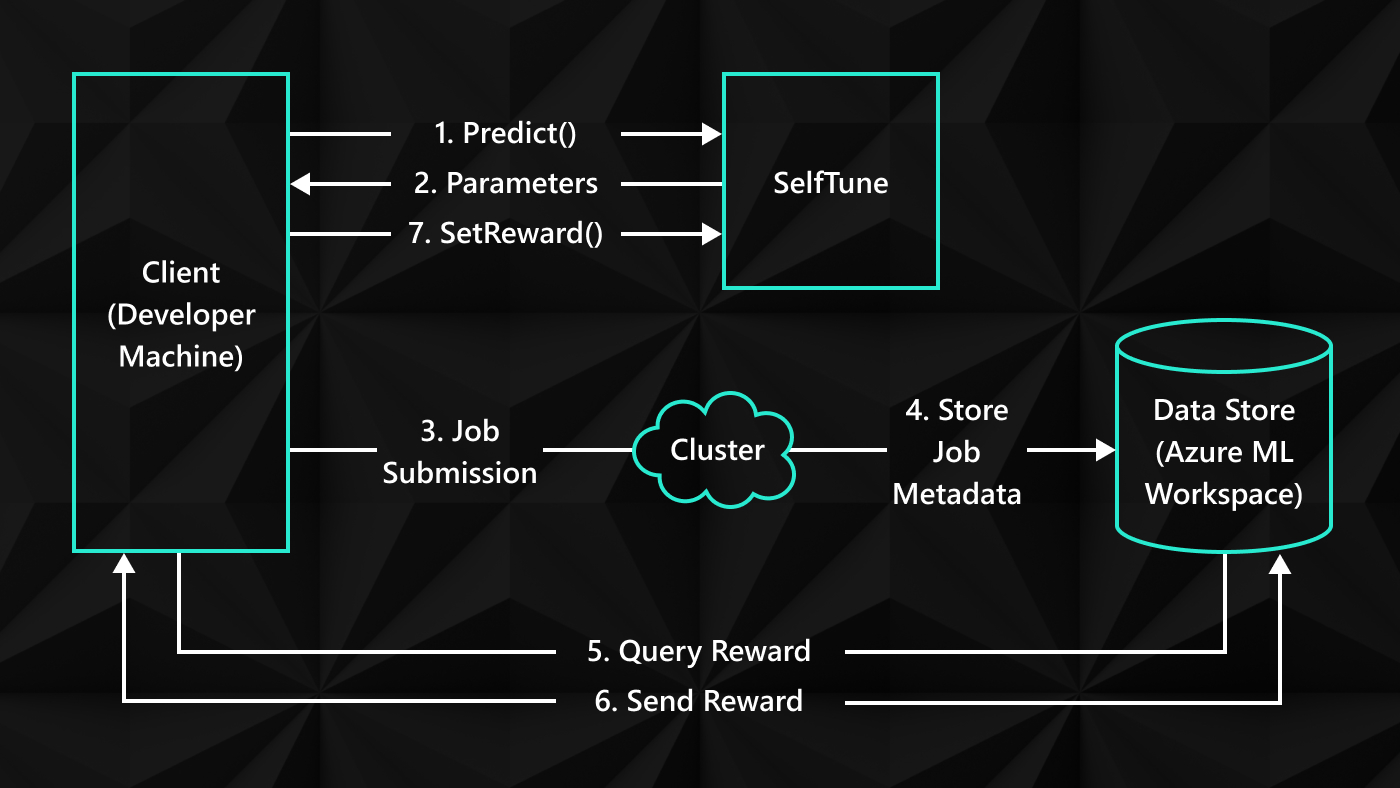

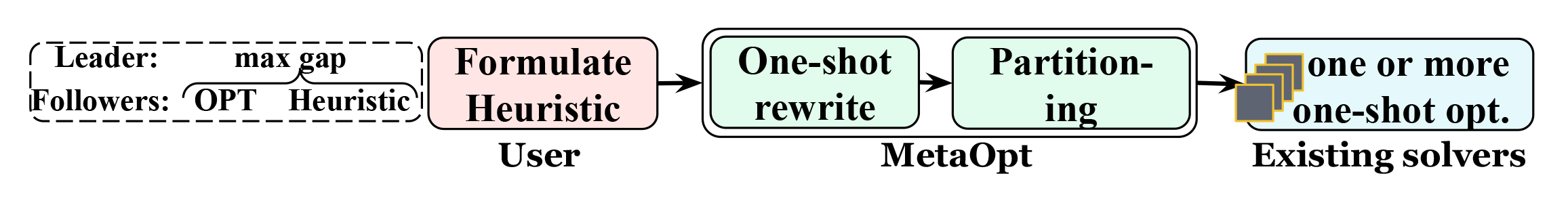

To use MetaOpt, users input the heuristic they want analyzed and either the optimal algorithm or another heuristic. MetaOpt efficiently translates these inputs into a solver format. It then finds performance gaps and the inputs that cause them. Recognizing that not all users are versed in optimization theory, we designed a higher-level abstraction for MetaOpt. This feature enables users to input their heuristics using a few simple building blocks and constrain the input space to what is relevant in practice. MetaOpt can then analyze decisions made by the heuristic that led to underperformance or identify input properties that caused the heuristic to make suboptimal choices. We illustrate the MetaOpt workflow in Figure 1.

Rooted in game theory concepts

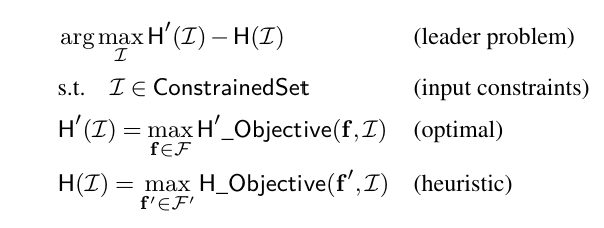

MetaOpt is based on Stackelberg games, a well-known class of leader-follower games in game theory. Here, the leader determines inputs for one or more followers, who must then optimize their outcomes based on these inputs. In the MetaOpt framework, the leader’s goal is to maximize the performance disparity between two algorithms (the followers) by deciding their inputs. The followers, representing the algorithms being compared, choose internal variables to optimize their outcomes. This, in turn, affects the leader’s results. We show this in Figure 2.

Looking ahead

MetaOpt marks a significant advance in the field of scalable, user-friendly analytical tools. It enables users to examine, understand, and explain differences in performance across competing algorithms. It also helps them improve those algorithms before deploying them in critical environments.

We began developing MetaOpt in early 2022 to address a specific need for heuristic analysis in our network’s traffic engineering solution. Since then, our focus has been on enhancing MetaOpt’s accessibility for users without a background in optimization theory. Currently, we are improving MetaOpt’s scalability and usability, and we are expanding the range of heuristics it supports. We plan to release it as an open-source tool at the USENIX Symposium on Networked Systems Design and Implementation (opens in new tab) (NSDI) conference, scheduled for April 16–18, 2024.

We believe that MetaOpt can significantly boost productivity for those studying or designing heuristics by serving as a risk-analysis engine and a tool for explainable AI and active learning. In the near future, we aim to publish papers on new MetaOpt applications and share our language for describing heuristics.

For more details, visit the MetaOpt webpage, and review our publications page for the latest developments.