Microsoft Research Blog

| Shuaiwen Leon Song, Bonnie Kruft, Minjia Zhang, Conglong Li, Martin Cai, and Yuxiong He

Editor’s note, Sept. 28, 2023 – The founding collaborators list was updated to correct omissions and the scientific foundation model graph was updated to correct information. In the next decade, deep learning may revolutionize the natural sciences, enhancing our capacity to…

| DeepSpeed Team, Rangan Majumder, and Andrey Proskurin

Last month, the DeepSpeed Team announced ZeRO-Infinity, a step forward in training models with tens of trillions of parameters. In addition to creating optimizations for scale, our team strives to introduce features that also improve speed, cost, and usability. As…

| DeepSpeed Team and Andrey Proskurin

In the last three years, the largest trained dense models have increased in size by over 1,000 times, from a few hundred million parameters to over 500 billion parameters in Megatron-Turing NLG 530B (MT-NLG). Improvements in model quality with size…

Welcome to Research Focus, a new series of blog posts that highlights notable publications, events, code/datasets, new hires and other milestones from across the research community at Microsoft. Barun Patra, Saksham Singhal, Shaohan Huang, Zewen Chi, Li Dong, Furu Wei,…

| DeepSpeed Team, Rangan Majumder, and Junhua Wang

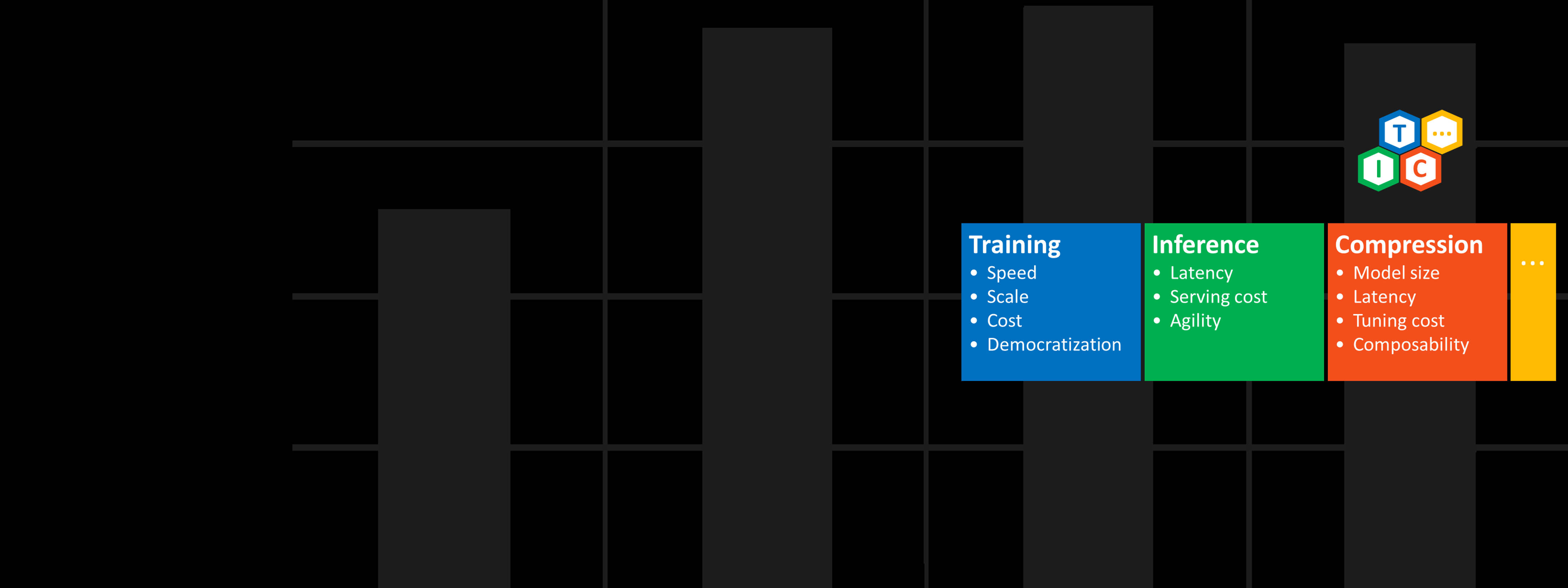

In February, we announced DeepSpeed, an open-source deep learning training optimization library, and ZeRO (Zero Redundancy Optimizer), a novel memory optimization technology in the library, which vastly advances large model training by improving scale, speed, cost, and usability. DeepSpeed has…

| Ali Alvi and Paresh Kharya

We are excited to introduce the DeepSpeed- and Megatron-powered Megatron-Turing Natural Language Generation model (MT-NLG), the largest and the most powerful monolithic transformer language model trained to date, with 530 billion parameters. It is the result of a research collaboration…

Over the past 30 years, Microsoft Research has undergone a shift in how it approaches innovation, broadening its mission to include not only advancing the state of computing but also using technology to tackle some of the world’s most pressing…

| Corby Rosset

This figure was adapted from a similar image published in DistilBERT. Turing Natural Language Generation (T-NLG) is a 17 billion parameter language model by Microsoft that outperforms the state of the art on many downstream NLP tasks. We present a…