Tongue-Gesture Recognition in Head-Mounted Displays

Head-mounted displays are often used in situations where a user's hands are occupied or otherwise unusable because of situational or permanent movement impairments. Hands-free interaction is critical for making mixed reality applications on head-mounted displays versatile and accessible. Traditionally, hands-free interaction relies on voice, which is not private and is unusable in loud environments, or on gaze, which is slow and demands attention. In this talk, I will propose a non-intrusive tongue gesture interface using sensors in head-mounted displays that can be used either independently or together with gaze tracking to reduce the latter's limitations. Then, I will share gesture recognition results as well as usability metrics from an experimental study and demonstrate a system combining tongue gestures with gaze tracking.

Speaker Bios

Tan Gemicioglu is a summer intern in the MSR Audio & Acoustics Research Group and an undergraduate student at Georgia Tech. At MSR, they investigated multimodal brain-computer interfaces and gesture interaction advised by Mike Winters and Yu-Te Wang. At Georgia Tech, they are advised by Thad Starner and Melody Jackson, studying passive haptic learning and movement-based brain-computer interfaces. Their primary research interests are in wearable interfaces assisting in communication and learning using physiological sensing and haptics.

- Series: Microsoft Research Talks
- Date:
- Speakers: Tan Gemicioglu
- Affiliation: Georgia Tech

Series: Microsoft Research Talks

Decoding the Human Brain – A Neurosurgeon’s Experience

Speakers: Pascal Zinn, Ivan Tashev

Galea: The Bridge Between Mixed Reality and Neurotechnology

Speakers: Eva Esteban, Conor Russomanno

Current and Future Application of BCIs

Speakers: Christoph Guger

Challenges in Evolving a Successful Database Product (SQL Server) to a Cloud Service (SQL Azure)

Speakers: Hanuma Kodavalla, Phil Bernstein

Improving text prediction accuracy using neurophysiology

Speakers: Sophia Mehdizadeh

DIABLo: a Deep Individual-Agnostic Binaural Localizer

Speakers: Shoken Kaneko

Recent Efforts Towards Efficient And Scalable Neural Waveform Coding

Speakers: Kai Zhen

Audio-based Toxic Language Detection

Speakers: Midia Yousefi

From SqueezeNet to SqueezeBERT: Developing Efficient Deep Neural Networks

Speakers: Sujeeth Bharadwaj

Hope Speech and Help Speech: Surfacing Positivity Amidst Hate

Speakers: Monojit Choudhury

-

-

-

-

-

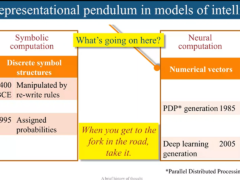

'F' to 'A' on the N.Y. Regents Science Exams: An Overview of the Aristo Project

Speakers: Peter Clark

-

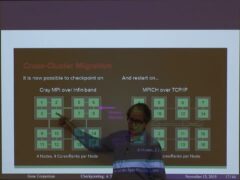

Checkpointing the Un-checkpointable: the Split-Process Approach for MPI and Formal Verification

Speakers: Gene Cooperman

Learning Structured Models for Safe Robot Control

Speakers: Ashish Kapoor
