News & features

Research Focus: Week of May 13, 2024

Welcome to Research Focus, a series of blog posts that highlights notable publications, events, code/datasets, new hires and other milestones from across the research community at Microsoft. Large language models (LLMs) have shown remarkable performance in generating text similar to…

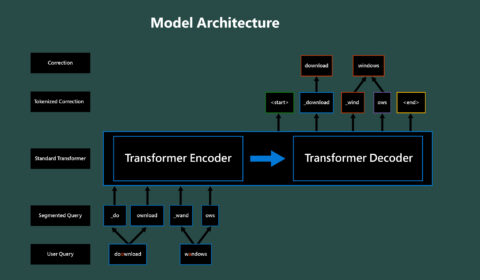

Speller100: Zero-shot spelling correction at scale for 100-plus languages

Jingwen Lu, Jidong Long (龙继东), and Rangan Majumder

At Microsoft Bing, our mission is to delight users everywhere with the best search experience. We serve a diverse set of customers all over the planet who issue queries in over 100 languages. In search, we’ve found about 15% of…

In the news | VentureBeat

Microsoft details Speller100, an AI system that checks spelling in over 100 languages

In a post on its AI research blog, Microsoft today detailed a new language system, Speller100, that the company claims is one of the most comprehensive ever made in terms of linguistic coverage and accuracy. Comprising a number of AI models…

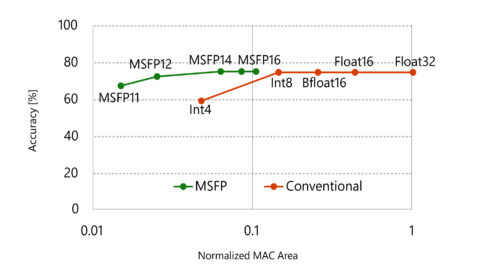

A Microsoft custom data type for efficient inference

Bita Darvish Rouhani, Doug Burger, Eric Chung, Rangan Majumder, Sangeetha Shekar, Saurabh Tiwary, Sitaram Lanka, and Steve Reinhardt

AI is taking on an increasingly important role in many Microsoft products, such as Bing and Office 365. In some cases, it’s being used to power outward-facing features like semantic search in Microsoft Word or intelligent answers in Bing, and…

In the news | siliconANGLE

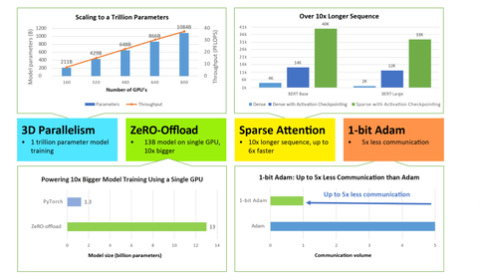

Microsoft AI tool enables ‘extremely large’ models with a trillion parameters

Microsoft Corp. has released a new version of its open-source DeepSpeed tool that it says will enable the creation of deep learning models with a trillion parameters, more than five times as many as in the world’s current largest model.

DeepSpeed: Extreme-scale model training for everyone

DeepSpeed Team, Rangan Majumder, and Junhua Wang

In February, we announced DeepSpeed, an open-source deep learning training optimization library, and ZeRO (Zero Redundancy Optimizer), a novel memory optimization technology in the library, which vastly advances large model training by improving scale, speed, cost, and usability. DeepSpeed has…

ZeRO-2 & DeepSpeed: Shattering barriers of deep learning speed & scale

DeepSpeed Team, Rangan Majumder, and Junhua Wang

In February, we announced DeepSpeed, an open-source deep learning training optimization library, and ZeRO (Zero Redundancy Optimizer), a novel memory optimization technology in the library, which vastly advances large model training by improving scale, speed, cost, and usability. DeepSpeed has…

In the news | WinBuzzer

Microsoft’s New Turing NLG is the Largest Transformer Language Model

Microsoft has developed a Transformer-based language generation model that it describes as the largest ever made. This week, Microsoft AI & Research announced Turing NLG, which is twice the size of its nearest competitor.

In the news | ITPro

Microsoft unveils ‘largest ever’ AI natural language model

Microsoft has revealed its largest deep learning language model, the Turing Natural Language Generation (T-NLG), which is claimed to have a record-breaking 17 billion parameters. The T-NLG, according to Microsoft, outperforms the largest deep learning models to date: the University of Washington’s Grover-Mega and Nvidia’s MegatronLM, which…