Microsoft Research blog

Research Collection: Tools and Data to Advance the State of the Art

“This is a game changer for the big data community. Initiatives like Microsoft Research Open Data reduce barriers to data sharing and encourage reproducibility by leveraging the power of cloud computing” —Sam Madden, Professor, Massachusetts Institute of Technology An open…

Research at Microsoft 2023: A year of groundbreaking AI advances and discoveries

AI saw unparalleled growth in 2023, reaching millions daily. This progress owes much to the extensive work of Microsoft researchers and collaborators. In this review, learn about the advances in 2023, which set the stage for further progress in 2024.

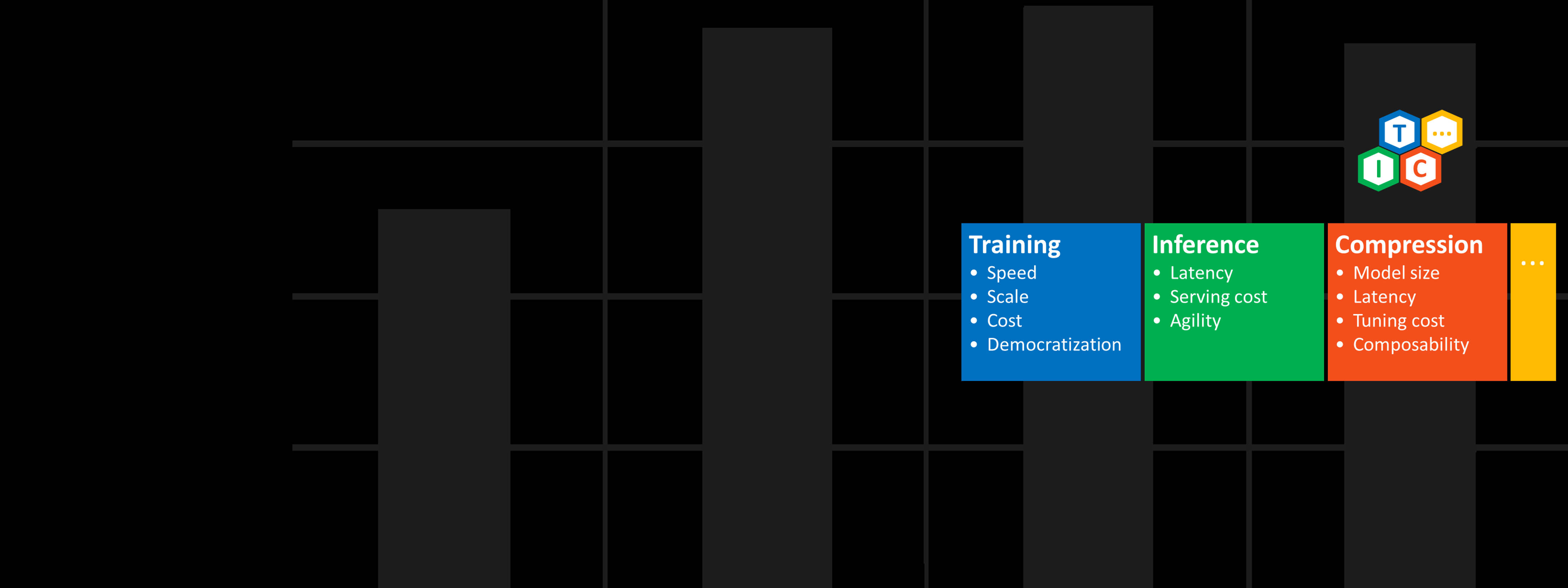

DeepSpeed ZeRO++: A leap in speed for LLM and chat model training with 4X less communication

| DeepSpeed Team and Andrey Proskurin

Large AI models are transforming the digital world. Generative language models like Turing-NLG, ChatGPT, and GPT-4, powered by large language models (LLMs), are incredibly versatile, capable of performing tasks like summarization, coding, and translation. Similarly, large multimodal generative models like…

ZeRO & DeepSpeed: New system optimizations enable training models with over 100 billion parameters

| DeepSpeed Team, Rangan Majumder, and Junhua Wang

The latest trend in AI is that larger natural language models provide better accuracy; however, larger models are difficult to train because of cost, time, and ease of code integration. Microsoft is releasing an open-source library called DeepSpeed, which vastly…

DeepSpeed powers 8x larger MoE model training with high performance

| DeepSpeed Team and Z-code Team

Today, we are proud to announce DeepSpeed MoE, a high-performance system that supports massive scale mixture of experts (MoE) models as part of the DeepSpeed (opens in new tab) optimization library. MoE models are an emerging class of sparsely activated…

DeepSpeed Compression: A composable library for extreme compression and zero-cost quantization

| DeepSpeed Team and Andrey Proskurin

Large-scale models are revolutionizing deep learning and AI research, driving major improvements in language understanding, generating creative texts, multi-lingual translation and many more. But despite their remarkable capabilities, the models’ large size creates latency and cost constraints that hinder the…

Tutel: An efficient mixture-of-experts implementation for large DNN model training

| Wei Cui, Yifan Xiong, Peng Cheng, and Rafael Salas

Mixture of experts (MoE) is a deep learning model architecture in which computational cost is sublinear to the number of parameters, making scaling easier. Nowadays, MoE is the only approach demonstrated to scale deep learning models to trillion-plus parameters, paving…